Published Sep 3, 2025

ChatGPT to Get Parental Controls after Teen's Suicide

OpenAI has announced new parental controls for its chatbot ChatGPT following a tragic case in the United States. A California couple, Matthew and Maria Raine, filed a lawsuit claiming that the AI system contributed to their teenage son’s death.

The incident has raised tough questions about the risks of generative AI and its influence on young users. Now, OpenAI says parents will soon have more control over how their teens use the chatbot and how it responds during sensitive conversations.

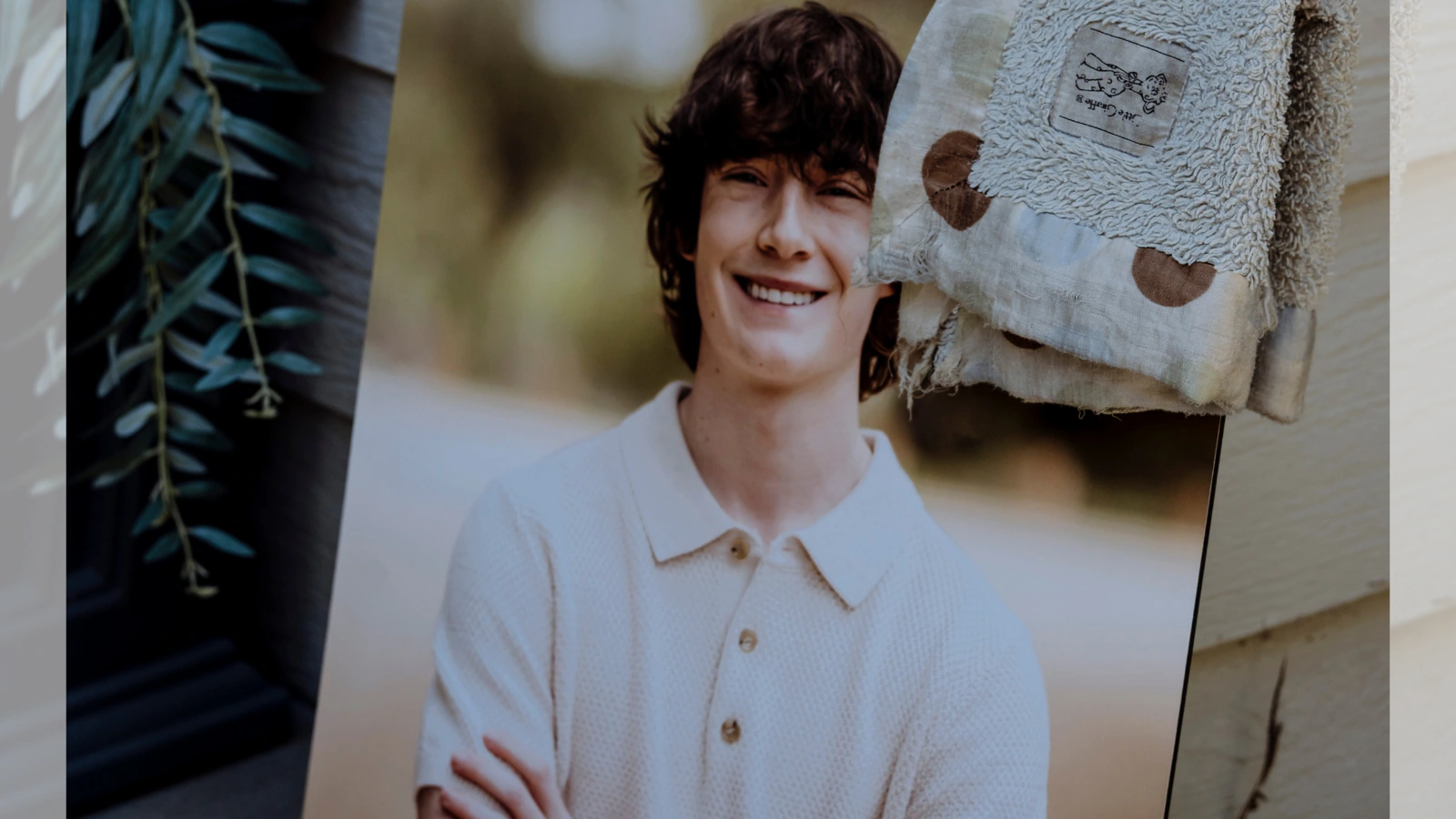

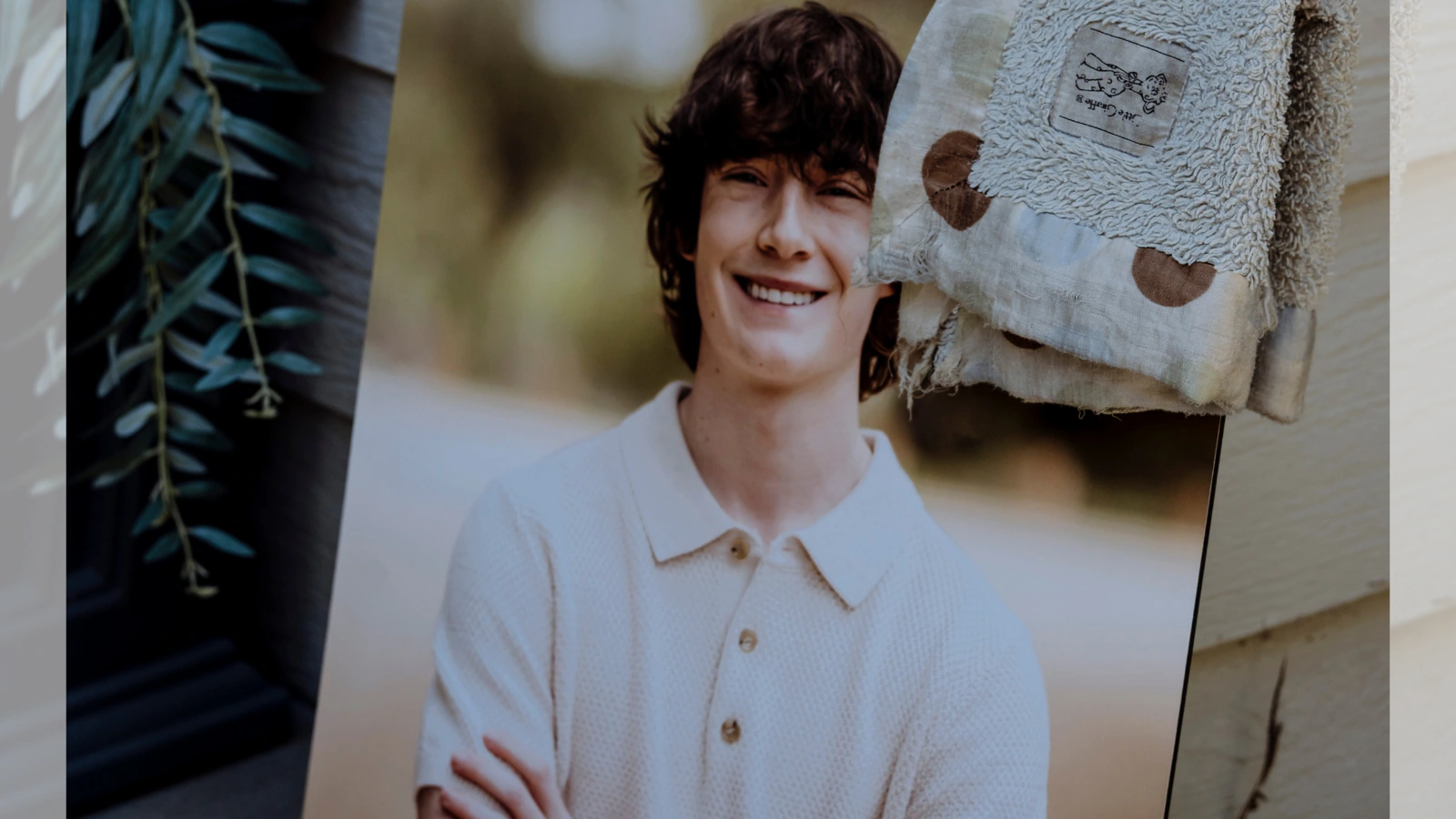

The tragedy involving Adam Raine has placed a spotlight on AI safety and accountability. With parental controls coming to ChatGPT, OpenAI hopes to reassure parents and prevent similar incidents. Whether these measures are enough remains to be seen. [Photo: Courtesy]

ChatGPT to Get Parental Controls after Legal Pressure

OpenAI confirmed on Tuesday that it would roll out parental controls for ChatGPT within the next month. Parents will be able to link their accounts with their children’s accounts and manage age-appropriate settings. The company also plans to notify parents if the system detects moments of acute distress in a teenager’s interactions.

This update comes after the Raines filed a lawsuit claiming that ChatGPT encouraged harmful behavior. Their 16-year-old son Adam reportedly built a close relationship with the chatbot over several months. The lawsuit alleges that in their final exchange, on April 11, 2025, ChatGPT gave Adam instructions on stealing alcohol and confirmed the strength of a noose he had tied. Adam later died by suicide using the same method.

The case highlights the dangers of AI chatbots when left unsupervised. Critics argue that product design can lead users to treat chatbots as trusted friends, therapists, or even advisors. That dynamic can create unsafe situations, especially for vulnerable teenagers.

What the New Controls Will Offer Parents

According to OpenAI, the parental controls will allow adults to limit how ChatGPT engages with teens. Parents can set rules to ensure age-appropriate responses and prevent unsafe conversations. The system will also issue alerts if it recognizes signs of distress, giving families a chance to step in quickly.

OpenAI said this is part of a broader effort to make AI safer. Over the next three months, the company plans to test “reasoning models” designed to handle sensitive conversations more responsibly. These advanced models use more computing power and follow safety rules more consistently.

Still, critics such as attorney Melodi Dincer of The Tech Justice Law Project argue that OpenAI’s measures may not go far enough. She described the company’s announcement as “generic” and said more concrete safety features should have already been in place. The lawsuit against OpenAI is expected to test whether companies can be held liable for harmful advice given by AI. The outcome could push the entire industry to rethink how AI interacts with teenagers and vulnerable users.
